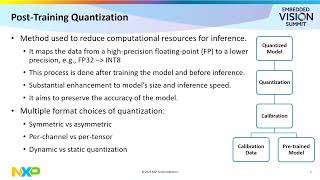

Web Reference:

Aug 3, 2022 · Post-training quantization includes general techniques to reduce CPU and hardware accelerator latency, processing, power, and model size with little degradation in model accuracy.

5 days ago · TensorRT supports both post-training quantization (PTQ) and quantization-aware training (QAT) workflows. These workflows enable you to optimize models for low-precision data types. The quantization process uses per-tensor, per-channel, or block-wise scaling.

Dec 25, 2024 · Two primary quantization approaches exist: Quantization Aware Training (QAT) and Post-Training Quantization (PTQ). Here, we dive into the tradeoffs of using them for LLM and Rec-sys models.
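To make the difference between per-tensor and per-channel scaling concrete, here is a minimal sketch in plain Python. It assumes symmetric signed 8-bit quantization with the range clipped to [-127, 127]. All function names (`quantize_symmetric`, `quantize_per_tensor`, `quantize_per_channel`, `max_abs_error`) are hypothetical and chosen for illustration; this is not the TensorRT API or any library's implementation.

```python
from typing import List, Tuple

QMAX = 127  # assumed symmetric int8 range: [-127, 127]


def quantize_symmetric(values: List[float]) -> Tuple[List[int], float]:
    """Quantize floats to integers using one shared scale (hypothetical helper)."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # Fall back to a scale of 1.0 when every value is zero to avoid dividing by zero.
    scale = max_abs / QMAX if max_abs > 0 else 1.0
    q = [max(-QMAX, min(QMAX, round(v / scale))) for v in values]
    return q, scale


def quantize_per_tensor(weights: List[List[float]]) -> Tuple[List[List[int]], float]:
    """One scale for the whole weight matrix."""
    flat = [v for row in weights for v in row]
    _, scale = quantize_symmetric(flat)
    q = [[max(-QMAX, min(QMAX, round(v / scale))) for v in row] for row in weights]
    return q, scale


def quantize_per_channel(weights: List[List[float]]) -> Tuple[List[List[int]], List[float]]:
    """One scale per row, treating each row as an output channel."""
    pairs = [quantize_symmetric(row) for row in weights]
    return [q for q, _ in pairs], [s for _, s in pairs]


def max_abs_error(weights: List[List[float]], q: List[List[int]], scales: List[float]) -> float:
    """Largest absolute difference between original and dequantized values."""
    return max(
        abs(w - x * s)
        for row_w, row_q, s in zip(weights, q, scales)
        for w, x in zip(row_w, row_q)
    )


if __name__ == "__main__":
    # Two channels with very different magnitudes.
    w = [[0.01, -0.02, 0.015], [1.0, -2.0, 1.5]]
    q_t, s_t = quantize_per_tensor(w)
    q_c, s_c = quantize_per_channel(w)
    print("per-tensor error: ", max_abs_error(w, q_t, [s_t] * len(w)))
    print("per-channel error:", max_abs_error(w, q_c, s_c))
```

In this toy setup, the small-magnitude channel loses most of its resolution under a single per-tensor scale, because the scale is dictated by the large channel. Giving each channel its own scale keeps the rounding error proportional to that channel's own range, at the cost of storing one scale per channel.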

YouTube Excerpt: ... an integer value that's where the second leg of

8 2 Post Training Quantization

Last Updated: April 12, 2026
