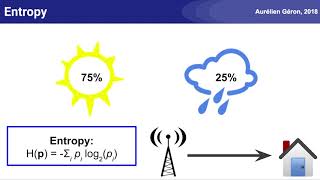

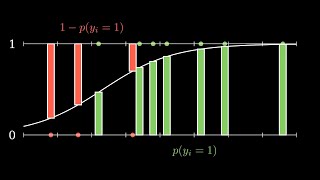

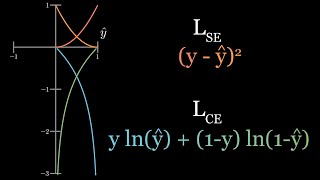

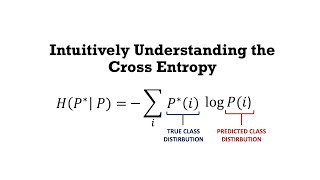

Web Reference: The cross-entropy loss is a scalar value that quantifies how far a model's predictions are from the true labels. For each sample in the dataset, it reflects how well the model's predicted probabilities match the true label. Cross-entropy arises in classification problems, where the task is to estimate the probabilities of different discrete outcomes, when a logarithm is introduced in the guise of the log-likelihood function: minimizing the cross-entropy is equivalent to maximizing the log-likelihood of the true labels. Cross-entropy is a popular loss function used in machine learning to measure the performance of a classification model. Namely, it measures the difference between the probability distribution predicted by the model and the true distribution of the labels.
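As a rough illustration of the description above, the following minimal sketch computes the cross-entropy loss for a small batch, assuming each true label is a single class index and each prediction is a list of class probabilities that sums to 1. For one sample the loss is the negative logarithm of the probability the model assigned to the true class, and the batch loss is the average over samples. The function names `cross_entropy` and `mean_cross_entropy`, the clipping constant `eps`, and the example numbers are all illustrative assumptions, not taken from the source.

```python
import math

def cross_entropy(true_label, predicted_probs, eps=1e-12):
    """Loss for one sample: -log of the probability given to the true class.

    `eps` (an assumed safeguard) clips the probability away from zero so
    that log(0) is never evaluated.
    """
    p = min(max(predicted_probs[true_label], eps), 1.0)
    return -math.log(p)

def mean_cross_entropy(true_labels, predicted_probs):
    """Average the per-sample losses into one scalar for the whole batch."""
    losses = [cross_entropy(y, p) for y, p in zip(true_labels, predicted_probs)]
    return sum(losses) / len(losses)

# Hypothetical batch: two samples, three classes.
labels = [0, 2]
probs = [
    [0.7, 0.2, 0.1],  # true class 0 gets probability 0.7
    [0.1, 0.3, 0.6],  # true class 2 gets probability 0.6
]

loss = mean_cross_entropy(labels, probs)
print(f"mean cross-entropy: {loss:.4f}")
```

A confident, correct prediction (probability close to 1 for the true class) yields a loss near zero, while a confident, wrong prediction yields a large loss, which is why the measure penalizes overconfident mistakes heavily.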

Last Updated: April 9, 2026